Step 1 – Ask an AI to draw you a perfect circle.

Try this. Open ChatGPT, Midjourney, Gemini, whichever one you prefer (although not Claude, because Claude doesn’t muck around with imagery). Type “draw a circle” and see what you get.

From a distance, it looks fine. Round. Convincing. Circle-shaped. But zoom in. Keep zooming. What you’ll find isn’t a circle at all. It’s an approximation, a mosaic of arcs and edges stitched together from thousands of circle-like fragments the model encountered during training. It learned what circles tend to look like by averaging millions of round-ish things, and it gave you its best composite.

It’s a Franken-circle.

The AI-generated circle looks like a circle…from a distance. Up close, it isn’t one.

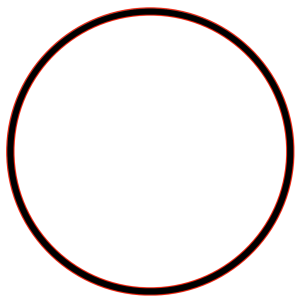

How imperfect? We pulled it into a graphics program and overlaid two mathematically perfect circles in red, one hugging the inside edge and one hugging the outside. If the AI’s circle were perfect, the black line would sit neatly between the red lines all the way around. It doesn’t. Look at the top, where the black drifts outside the red boundary. Look at the sides, where it pinches inward. The deviations are small, but they’re everywhere.

The AI-generated circle (black) overlaid with two mathematically perfect reference circles (red). The deviations are visible at every point along the curve.
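The two red reference circles can be thought of as a measurement: the smallest and largest distance from the center to the traced outline. Here is a minimal sketch of that idea in Python. The `wobbly_outline` function is a hypothetical stand-in for a traced AI outline (we are not reproducing the actual image data here), and the center, radius, and wobble values are invented for illustration.

```python
import math

def radial_band(points, center):
    """Return (inner_radius, outer_radius) of the points measured from center."""
    cx, cy = center
    radii = [math.hypot(x - cx, y - cy) for x, y in points]
    return min(radii), max(radii)

def wobbly_outline(center, radius, wobble, n=360):
    """Hypothetical stand-in for a traced outline: a circle whose radius drifts."""
    cx, cy = center
    points = []
    for i in range(n):
        t = 2 * math.pi * i / n
        r = radius + wobble * math.sin(3 * t)  # drift in and out three times per lap
        points.append((cx + r * math.cos(t), cy + r * math.sin(t)))
    return points

center = (200.0, 200.0)
inner, outer = radial_band(wobbly_outline(center, 80.0, 1.5), center)
band_width = outer - inner  # zero (up to rounding) for a perfect circle
```

The wider the band between the inner and outer radius, the further the outline strays from a true circle.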

A Better Circle

Step 2 – Now ask the same AI to “draw a circle using SVG.”

Depending on your AI, it’ll generate something like the bit of code below:

<circle cx="200" cy="200" r="80"/>

…and probably draw it for you too:

Three attributes. One line of code. And it produces a circle that is mathematically perfect at every scale. Zoom in a thousand times and the curve is still flawless, because it isn’t built from pixels or fragments or statistical averages. It’s built from an equation: a center point, a radius, and the simple rule that every point on the circumference is exactly the same distance from the center.
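On its own, that one-line element needs a surrounding svg tag before a browser will draw it. As a rough sketch, the Python below wraps the three attributes in a complete SVG document. The 400-unit canvas and the `fill` and `stroke` settings are our assumptions, not part of the snippet above (without them, a browser fills the circle solid black), and `circle_svg` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

def circle_svg(cx, cy, r, size=400):
    """Build a standalone SVG document containing a single outlined circle."""
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'width="{size}" height="{size}" viewBox="0 0 {size} {size}">'
        f'<circle cx="{cx}" cy="{cy}" r="{r}" fill="none" stroke="black"/>'
        f'</svg>'
    )

svg_text = circle_svg(200, 200, 80)

# Parse it back to confirm the markup is well-formed and holds exactly one circle.
root = ET.fromstring(svg_text)
circle = root[0]
```

Saving `svg_text` to a file ending in .svg and opening it in a browser should show the circle. The file stores only the center and radius, never the pixels.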

The SVG circle is clean, consistent, and mathematically defined.

Same test. We overlaid the same two perfect red circles on top of the SVG output. This time, the black line and the red lines nest perfectly – no gaps. They sit on top of each other all the way around, because they are the same math.

The SVG circle (black) overlaid with the same two perfect reference circles (red). They are indistinguishable. Same math, same result.

Here’s one more thing worth noticing. The Franken-circle cost about 1,350 tokens (tokens are the units AI providers use to measure, and bill for, the work a model does). The SVG circle… about 350 tokens. The imperfect version takes nearly four times the resources to describe something worse. There’s a whole article to be written about what that ratio means for the economics of AI (look for it soon), but for now just hold that thought: the inside-out approach isn’t only more accurate. It’s dramatically more efficient.
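For anyone who wants to check the "nearly four times" claim, the arithmetic is short. The two token counts are the figures quoted above; the variable names are ours.

```python
franken_tokens = 1350  # figure quoted above for the generated image
svg_tokens = 350       # approximate figure quoted above for the SVG answer

ratio = franken_tokens / svg_tokens        # about 3.86
savings = 1 - svg_tokens / franken_tokens  # about 0.74, i.e. roughly 74% fewer tokens
```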

The first circle is assembled from the outside in: pieces of other circles, averaged and stitched. The SVG circle is generated from the inside out: a single principle, expressed mathematically, producing perfection at every scale.

The word for that difference is integrity. It comes from the Latin integer, meaning whole, complete, undivided.

This distinction matters more than you might think. Especially if you’re in the local news business.

The Outside-In Problem

Large language models are extraordinary machines. They can write poetry, summarize legal documents, translate between languages, and generate images that fool the human eye at a glance. They accomplish all of this through sophisticated pattern matching: given everything the model has seen, what is the statistically most likely next word, pixel, or token?

The math behind this process is elegant, but it’s also a LOT. Text (or circle-bits in this case) gets converted into high-dimensional vectors, which are mathematical points in a space with hundreds or thousands of dimensions, where proximity equals semantic (or shapely) similarity. “Doctor” and “hospital” and “health” end up near each other (mathematically) not because anyone told the model they’re related, but because they appear in similar contexts across billions of documents (or images). The model builds an enormous map of associations, and then navigates that map to generate outputs that feel coherent and relevant.
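To make "proximity equals similarity" concrete, here is a toy sketch using cosine similarity, one common way to measure how close two vectors point. The three-dimensional vectors below are invented for illustration. Real models learn vectors with hundreds or thousands of dimensions, and these numbers come from no actual model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means 'pointing the same way'."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, made up for this example
embeddings = {
    "doctor":   [0.9, 0.8, 0.1],
    "hospital": [0.8, 0.9, 0.2],
    "bicycle":  [0.1, 0.2, 0.9],
}

near = cosine_similarity(embeddings["doctor"], embeddings["hospital"])
far = cosine_similarity(embeddings["doctor"], embeddings["bicycle"])
```

With these made-up numbers, "doctor" and "hospital" score close to 1, while "doctor" and "bicycle" score much lower.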

At the heart of all of this is a single equation called scaled dot-product attention:

Attention(Q, K, V) = softmax(QKᵀ / √dₖ)V

If that looks like a lot for one equation, it is. Q, K, and V represent Queries, Keys, and Values (roughly: “what am I looking for,” “what do I contain,” and “what do I pass along if there’s a match”). The model multiplies the queries by the keys, scales the result, and runs it through a softmax function to decide how much every piece of input should pay attention to every other piece, then uses those weights to blend the values. This single operation can run billions of times during your query (i.e., the ‘inference’ step). And that’s after the tokenization and vectorization, and before the U-Net architecture that handles spatial relationships, the attention layer stacking, the iterative sampling steps, and the cross-attention mechanisms that connect the text prompt to the image generation. All of that machinery, to produce a very imperfect circle.
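For the curious, here is what one pass of that equation looks like in plain Python. This is a teaching sketch on tiny hand-made matrices, not how production systems implement it (they use optimized tensor libraries and far larger inputs). The values of Q, K, and V are invented for illustration.

```python
import math

def softmax(row):
    """Turn a list of scores into positive weights that sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    outputs, all_weights = [], []
    for q in Q:
        # Score this query against every key, scaled by sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        all_weights.append(weights)
        # Blend the value rows using the attention weights
        outputs.append([
            sum(w * v[col] for w, v in zip(weights, V))
            for col in range(len(V[0]))
        ])
    return outputs, all_weights

# Hypothetical toy inputs: two positions, two dimensions each
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]

outputs, weights = attention(Q, K, V)
```

Each row of `weights` sums to 1, and each output row is a weighted blend of the value rows, leaning toward the key that best matches the query.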

Now compare that to the math that produces the SVG circle:

x² + y² = r²

One equation – run once! No matrices. No softmax. No iteration. Just a center point and a radius, and every point on the circumference falls into place. The first approach throws enormous computational power at the problem and gets close. The second applies a principle and gets it right.
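The equation above describes a circle centered on the origin. For a circle centered somewhere else, such as the SVG example at (200, 200), the same rule reads (x − cx)² + (y − cy)² = r². The sketch below, with helper names of our own choosing, generates points from a center and a radius and checks how far each one misses that equation, at two very different levels of detail.

```python
import math

def circle_points(cx, cy, r, n):
    """Return n evenly spaced points on the circle with center (cx, cy) and radius r."""
    return [
        (cx + r * math.cos(2 * math.pi * i / n), cy + r * math.sin(2 * math.pi * i / n))
        for i in range(n)
    ]

def max_residual(points, cx, cy, r):
    """Largest amount by which any point misses (x - cx)^2 + (y - cy)^2 = r^2."""
    return max(abs((x - cx) ** 2 + (y - cy) ** 2 - r ** 2) for x, y in points)

coarse = max_residual(circle_points(200, 200, 80, 12), 200, 200, 80)
fine = max_residual(circle_points(200, 200, 80, 100_000), 200, 200, 80)
```

Whether you ask for 12 points or 100,000, the only error left is floating-point rounding. The rule does not degrade as you zoom in.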

But here’s the thing about semantic maps built from the outside in: they’re only as good as the territory they surveyed. And they can only give you recombinations of what they’ve already seen.

When you ask an LLM to draw a circle, it doesn’t know what a circle is. It knows what circles have looked like in its training data. So it gives you its best impression: a statistical composite that’s convincing from a distance but falls apart under scrutiny. It’s the same reason AI-generated hands look ‘off’, and AI-generated text sometimes states “facts” that are confidently, articulately wrong. The model is interpolating between fragments, not generating from principle.

In image generation, this is mildly annoying. In news, it’s unethical, and in some cases could be libelous or even dangerous.

The Franken-Circle Problem in Journalism

When a newsroom asks AI to write a news story, the AI does exactly what it does with circles. It assembles fragments. Phrases from thousands of similar-sounding stories. Sentence structures that are statistically likely given the topic. Facts that tend to be true in a given context.

The result can read beautifully. It can be grammatically flawless, well-structured, and thoroughly plausible. But it can be subtly wrong in ways that are nearly impossible to catch without domain expertise, because the errors aren’t random. They’re statistically reasonable. They’re the kind of thing that tends to be true in stories like this one, and looks true from a distance, even when it isn’t true in this story.

And the worst examples aren’t the obvious blunders. The worst is when we let AI draw conclusions.

Say your reporter attends a city council meeting and takes detailed notes. You feed those notes to an AI and ask it to write the story. The AI doesn’t just summarize what happened. It fills in context from thousands of other city council stories it was trained on. It infers motivations. It draws connections between this vote and a budget shortfall that seems related but isn’t. It produces conclusions that sound informed and authoritative, but are actually pattern-matched from other towns, other councils, other years. Your readers can’t tell the difference. Your reporter might not either, if they’re in a hurry.

Or take something even more common in a small newsroom: photo and video editing. A reporter shoots a photo at a community event, but the lighting is bad or the framing is off. The temptation is to ask a generative AI to “fix” the image. The AI will happily oblige. It’ll brighten the shadows, maybe sharpen the faces, and add people to the background that were never there – all plausible. And that word, “plausible,” is the problem. It’s generating pixels that weren’t there. In a news photo. For a small newsroom that may not have a dedicated photo editor scrutinizing every output, this is a quietly corrosive habit.

It goes beyond the newsroom too. A local news operation is also a small business, and the same Franken-circle trap shows up in the back office. Ask your AI “what should our ad sales strategy be?” and it will give you a confident, articulate answer built from patterns across thousands of businesses that are not your business, in markets that are not your market, serving communities it knows nothing about. It sounds smart. It might even sound right. But it’s the Franken-circle again: an approximation assembled from the outside in, and it’s going to deviate from reality in ways you won’t spot until you’ve already acted on it.

If you’ve ever read AI-generated text and felt something was slightly off, a vague unease, a sense that the words were right but the meaning was hollow, you’ve experienced something similar to what robotics researchers call the “uncanny valley.” That eerie feeling when something looks almost human but isn’t quite. In this case, it’s not a face that’s wrong. It’s the integrity of the information. It looks whole from a distance. Zoom in and it’s fragments.

For an industry whose entire value proposition is trustworthy information, this is an existential problem.

The Inside-Out Alternative

Here’s what most of the “AI in journalism” conversation misses: there’s another way to use AI.

And let’s be honest about the stakes. For small newsrooms running on skeleton crews, generative AI is a once-in-a-generation force multiplier. It would be negligent to ignore it. The question isn’t whether to use it. The question is how to use it without compromising the thing that makes your local newsroom worth reading.

Go back to our circles. The AI-generated circle failed because we asked the AI to produce the output directly, to be the artist. But when we asked the AI to write SVG code, the output was perfect. Not because the AI understood circles any better, but because it generated instructions for a system that does. The AI wrote code. The code used a mathematical equation. The equation produced a circle with integrity… from the inside out.

This is not a trivial distinction. It’s the entire strategy.

A newsroom shouldn’t ask AI to write stories or draw conclusions or fix photos. It should ask AI to operate the tools that help journalists do those things better and faster. The difference is everything:

Outside-in (Franken-circle) AI in news:

- AI writes a city council story from your reporter’s notes, filling in context and conclusions from thousands of other councils in other towns

- AI “touches up” a news photo using generative fill, inventing pixels (people! license plates!) that weren’t actually captured by the camera

- AI acts as your CRM, generating sales advice and customer strategies based on patterns from businesses nothing like yours

- AI does your budget math, producing numbers that are statistically plausible but may not actually add up

Inside-out (Integrity) AI in news:

- AI transcribes the actual audio from the city council meeting using speech-to-text algorithms: bounded, verifiable, deterministic. Your reporter draws the conclusions.

- AI runs ffmpeg, ImageMagick, or Photoshop to adjust exposure, crop, and color-correct using the actual pixel data from the actual photograph. No generated pixels. No invented content.

- AI interacts with your CRM (Salesforce, HubSpot, whatever you use), running queries, pulling reports, segmenting contacts, and surfacing patterns in your data using the CRM’s own algorithms. You make the strategic calls.

- AI runs your spreadsheet, executing formulas in Excel or Google Sheets with real arithmetic that is verifiable to the penny. You read the results.
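To picture the photo example from the list above, here is a minimal sketch of the kind of instruction an AI assistant might hand to ImageMagick. It assumes ImageMagick 7 (the `magick` command) is installed. The file names, crop box, and adjustment amounts are hypothetical, and `imagemagick_adjust_command` is our own helper name. Every option used here only transforms pixels the camera already captured.

```python
def imagemagick_adjust_command(src, dst, crop, brightness, contrast):
    """Build an ImageMagick argument list that crops and adjusts brightness/contrast.

    crop is a geometry string such as "1600x900+100+50" (width x height + x + y offsets).
    brightness and contrast are percentage adjustments between -100 and 100.
    """
    return [
        "magick", src,
        "-crop", crop, "+repage",  # cut to the crop box, then reset the canvas offset
        "-brightness-contrast", f"{brightness}x{contrast}",
        dst,
    ]

# Hypothetical file names and settings
cmd = imagemagick_adjust_command(
    "council.jpg", "council_edit.jpg", "1600x900+100+50", 10, 5
)
# To actually run it: import subprocess; subprocess.run(cmd, check=True)
```

Because the command is just a list of explicit settings, an editor can read it, rerun it, and get the same result from the same photo.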

In every inside-out case, the AI is operating within a system that has its own integrity, its own center point and radius. The AI isn’t generating the truth. It’s helping deliver it.
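The budget example works the same way. Here is a small sketch of "real arithmetic, verifiable to the penny," using Python's decimal module as a stand-in for a spreadsheet's formulas. The line items are invented for illustration.

```python
from decimal import Decimal

# Hypothetical monthly line items, stored as exact decimal strings
line_items = {
    "printing": "1249.10",
    "freelancers": "830.20",
    "hosting": "59.99",
}

# Exact decimal addition: the same inputs always give the same total
total = sum((Decimal(amount) for amount in line_items.values()), Decimal("0"))

# Contrast: binary floating point cannot represent 0.1 or 0.2 exactly
float_is_exact = (0.1 + 0.2 == 0.3)
decimal_is_exact = (Decimal("0.1") + Decimal("0.2") == Decimal("0.3"))
```

The total here is exactly 2139.29, and anyone with a calculator can confirm it. That is the difference between computing a number and predicting one.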

Why This Matters Now

We’re launching this article on April 9, 2026, the first-ever National Local News Day. It’s a day dedicated to reconnecting Americans with the local outlets that keep our communities informed, our leaders accountable, and our neighbors connected.

Nearly 3,500 U.S. newspapers have disappeared since 2005. More than 270,000 journalism jobs have been lost. Over 200 counties in America have zero local news outlets. Fifty-five million Americans have limited or no access to local reporting.

In the middle of this crisis, AI has arrived. And the question every local news organization faces is: how do we use this?

The temptation is obvious. Use AI to do more with less. Let it write stories, generate content, fill the gaps left by shrinking newsrooms. That’s the outside-in approach. It’s fast, it’s cheap, and it produces something that looks like journalism from a distance.

But zoom in, and it’s a Franken-circle.

The alternative takes more thought but produces something real. Use AI to make your journalists more efficient, not to replace them. Use it to transcribe, distribute, match, tag, optimize, analyze. All the mechanical work that has nothing to do with truth-telling and everything to do with logistics. Let AI be the vector tool, not the artist.

Build it from the inside out. Start with what’s real, the community, the facts, the actual events, and use AI to extend the reach and efficiency of the people whose job it is to report on them.

That’s what we’re building at Local NEWS Network. Not AI that writes stories, but AI that helps local newsrooms get their stories to the people who need them. The tools have integrity because they start from a center point, the community, and work outward, governed by mathematics we can verify and logic we can audit.

The Test You Can Run Yourself

We started this piece with a simple experiment, and it’s one you can replicate in about two minutes:

Method 1: Ask AI to generate a circle image. Open an AI image generator. Prompt: “A perfect black circle on a white background.” Save the image. Zoom in at the edge. Notice the imperfections, the aliasing, the wobble, the subtle asymmetry…and it’s probably an oval if you measure it.

Method 2: Ask AI to write circle code. Open the same AI chat (or Claude, since Claude loves code). Prompt: “Write SVG code for a perfect circle.” Copy the code into an HTML file and open it in a browser. Zoom in as far as you want. The circle is mathematically perfect.

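Method 3: Write the code yourself. Open a text editor. Type the circle markup from Step 2 inside an svg tag, for example <svg width="400" height="400"><circle cx="200" cy="200" r="80"/></svg>, and save it as an .html file. Open it in a browser. Also perfect.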

Methods 2 and 3 produce identical results. The AI in Method 2 didn’t need to understand circles. It just needed to write instructions for a system that does. And that’s the whole point.

When AI operates tools that have integrity, the output has integrity. When AI generates the output directly, you get an impressive approximation that works at a distance and falls apart up close.

For a news organization, “works at a distance and falls apart up close” is not an acceptable standard. Our communities deserve the Real Circle.

Local NEWS Network is launching its new platform on National Local News Day, April 9, 2026. We’re building technology that helps local newsrooms thrive, from the inside out. Learn more at thelocalnews.us.

This is the first article in a series exploring the intersection of technology, humanity, and community. Next up: “The Map and the Territory,” the mathematics of how search engines find things, and what it means for local news in the age of AI-powered search.

A note on how this article was made: Yes, I used AI to help write this. But I did exactly what I described above. I developed the concepts, ran the circle tests, built the comparison graphics, checked the math, and edited every paragraph. The AI operated the tools. I took responsibility for the final product. The result: an article that would have taken me weeks to write (and polish) took days instead. That’s the force multiplier…with integrity. That’s the point.